Tech

Exclusive: Positron raises $230M Series B to take on Nvidia’s AI chips

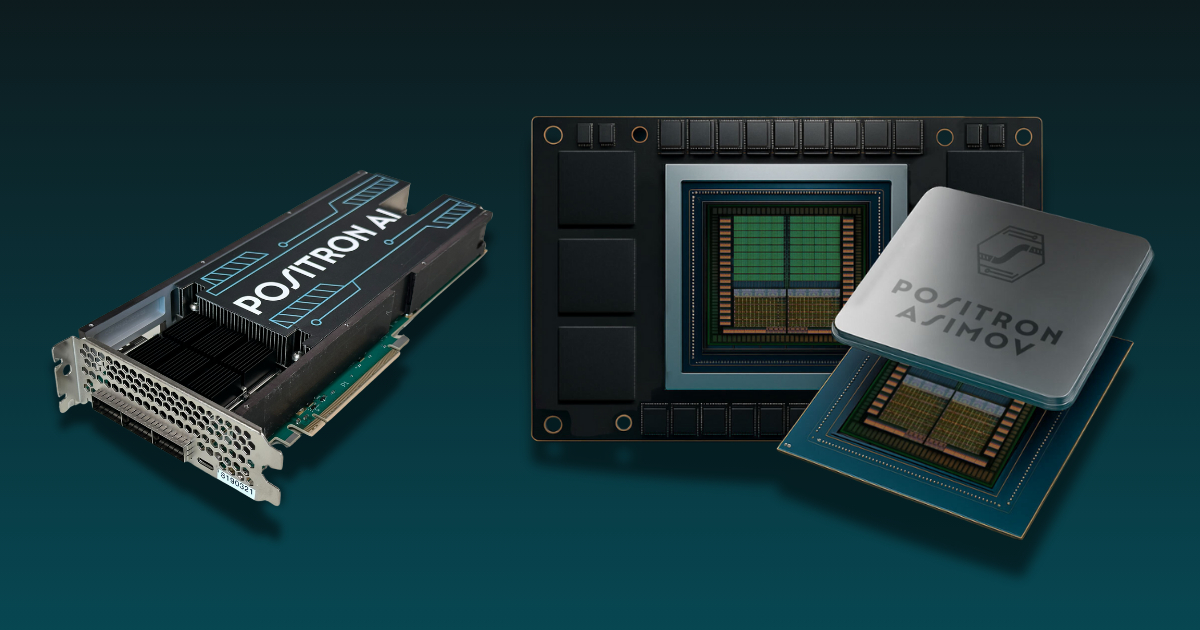

Semiconductor startup Positron has secured $230 million in Series B funding, TechCrunch has exclusively learned. The outfit plans to use the capital to speed up deployment of its high-speed memory chips, a critical component of the hardware used for AI workloads, sources familiar with the matter told TechCrunch.

Investors in the round include Qatar Investment Authority (QIA), the country’s sovereign wealth fund, which has been increasingly focused on building out AI infrastructure, the sources said.

The Reno-based startup’s Series B comes as hyperscalers and AI firms push to reduce their reliance on longstanding leader Nvidia. These firms include OpenAI, which, despite being one of Nvidia’s largest and most important customers, is reportedly unsatisfied with some of the firm’s latest AI chips and has been seeking alternatives since last year.

Meanwhile, Qatar, through QIA, has been accelerating a broader push into so-called “sovereign” AI infrastructure – a priority repeatedly underscored at Web Summit Qatar in Doha this week. Several sources told TechCrunch the country views compute capacity as critical to staying competitive on the global economic stage, and is positioning itself as a leading AI services hub in the Middle East, fueling interest in startups like Positron.

The strategy is already taking shape through major commitments, including a $20 billion AI infrastructure joint venture with Brookfield Asset Management that was announced in September.

Positron’s fundraise brings the three-year-old startup’s total capital raised to just over $300 million. The startup previously raised $75 million last year from investors including Valor Equity Partners, Atreides Management, DFJ Growth, Flume Ventures and Resilience Reserve.

The company claims its first-generation chip, Atlas, manufactured in Arizona, can match the performance of Nvidia’s H100 GPUs while using less than a third of the power. Positron is focused on inference – computing needed to run AI models for real-world applications – rather than training large language models. That focus positions the company to benefit as demand for inference hardware surges and businesses increasingly shift from building large models to deploying them at scale.

Sources tell TechCrunch that beyond its memory capabilities, Positron’s chips also perform strongly in high-frequency and video-processing workloads.

TechCrunch has reached out to Positron for more information.

Tech

Databricks bought two startups to underpin its new AI security product

With an overflowing war chest from its $5 billion raise that closed last month (not to mention billions in revenue), Databricks is acquiring.

The company, best known for its cloud data analytics platform, announced on Tuesday that it was launching a new security product called Lakewatch. Lakewatch builds on Databricks’ ability to store massive amounts of data to perform classic Security Information and Event Management (SIEM) tasks, like detecting and investigating threats. Only it does so with the help of AI agents powered by Anthropic’s Claude.

Databricks bought two startups to underpin this new product: Antimatter, in an undisclosed-until-now deal that closed last year, and SiftD.ai, in a deal that flew together over the last couple of weeks and closed on Monday, the company told TechCrunch.

Terms were not disclosed for either deal. Antimatter, founded by security researcher Andrew Krioukov, raised $12 million led by New Enterprise Associates in 2022, according to PitchBook estimates. If tiny SiftD.ai had raised money, PitchBook wasn’t aware.

SiftD.ai was so young that it had launched its product only in November: an interactive notebook (like a Jupyter notebook) intended to be a tool where people and agents worked together. The Databricks team knew the startup’s co-founder and CEO, Steve Zhang, from his many years as chief scientist at Splunk (through 2021). He created the Search Processing Language while there. (His LinkedIn also says he was CTO of Astronomer, the company known for the Coldplay CEO scandal, but left there in 2023 before founding SiftD.)

Both of these acquisitions were of small startups: only a few people in SiftD’s case and fewer than 50 for Antimatter, according to LinkedIn. SiftD appears to be an acqui-hire. With Antimatter, Databricks probably gained some IP, too. Krioukov had demonstrated Antimatter’s tech onstage in 2024 at RSA’s Innovation Sandbox Contest. Antimatter was working on a “data control plane” tool that allowed enterprises to deploy agents securely while protecting sensitive data.

While Databricks declined to say how many employees it acquired, it confirmed that the startups’ employees did join the company. Krioukov, who’s been at Databricks for months now, is leading the Lakewatch team.

We asked Databricks if it was going to keep shopping for startups and a spokesperson essentially said, yes, that it continuously has its feelers out. “We’re always looking to what’s next — our goal is to stay ahead of the market and close gaps in what our customers need,” the spokesperson said.

Tech

Spotify tests new tool to stop AI slop from being attributed to real artists

At a time when AI slop is flooding music streaming platforms, Spotify is beta testing a new “Artist Profile Protection” feature that allows artists to review releases before they go live on their profiles. The idea behind the new tool is to give artists more control over which tracks are associated with their name on the streaming service.

“Music has been landing on the wrong artist pages across streaming services, and the rise of easy-to-produce AI tracks has made the problem worse,” Spotify wrote in a blog post. “That’s not the experience we want artists to have on Spotify, and that’s why we’ve made protecting artist identity a top priority for 2026. Today, we’re announcing a first-of-its-kind solution to a problem that’s affected streaming for years.”

Artists in the beta have the ability to review and approve or decline releases delivered to Spotify. Only the releases that they approve will appear on their artist profile, contribute to their stats, and show up in users’ recommendations.
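The review flow described above can be pictured with a small Python sketch. It is purely illustrative: the `ArtistProfile` class, its fields, and the rule that releases go live directly when protection is off are assumptions made for the example, not anything Spotify has published about how the feature is built.

```python
from dataclasses import dataclass, field


@dataclass
class ArtistProfile:
    """Hypothetical model of a profile with an approval queue."""
    name: str
    protection_on: bool = False
    pending: list = field(default_factory=list)  # delivered, awaiting review
    live: list = field(default_factory=list)     # visible on the profile

    def deliver(self, release: str) -> None:
        # With protection on, a delivered release waits for the artist.
        # Assumption: with protection off, it goes straight to the profile.
        if self.protection_on:
            self.pending.append(release)
        else:
            self.live.append(release)

    def review(self, release: str, approve: bool) -> None:
        # Only approved releases reach the profile; declined ones are dropped.
        self.pending.remove(release)
        if approve:
            self.live.append(release)


profile = ArtistProfile("Example Artist", protection_on=True)
profile.deliver("Real Single")
profile.deliver("Misattributed Track")
profile.review("Real Single", approve=True)
profile.review("Misattributed Track", approve=False)
```

In this toy model, nothing appears under the artist's name until it has been approved, which is the behavior Spotify describes for artists in the beta.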

Spotify’s announcement comes a week after Sony Music said that it has requested the removal of more than 135,000 AI-generated songs impersonating its artists on streaming services.

Spotify says that while open distribution has made it easier for independent artists to release music, it also creates opportunities for mistakes and bad actors. Tracks can end up on the wrong artist’s profile due to metadata errors, confusion between artists with the same name, or malicious attempts to attach music to an artist’s profile.

“When that happens, it can impact your catalog, your stats, your Release Radar, and how fans discover your music,” Spotify explains. “We know how frustrating this can be for both artists and fans alike and one of the top requests we’ve heard from artists over the past year is that you want more visibility before music appears under your name.”

Spotify notes that while the new feature isn’t necessary for every artist, it’s designed for artists who have experienced repeated incorrect releases, have a common artist name, or want more control over what appears on their profile.

Artists who are included in the beta will see the feature in their “Spotify for Artists” settings on desktop and mobile web. If they turn “Artist Profile Protection” on, they’ll receive an email notification when music is delivered to Spotify with their name attached to it. From there, they can approve or decline the request.

Tech

Anthropic hands Claude Code more control, but keeps it on a leash

For developers using AI, “vibe coding” right now comes down to babysitting every action or risking letting the model run unchecked. Anthropic says its latest update to Claude aims to eliminate that choice by letting the AI decide which actions are safe to take on its own — with some limits.

The move reflects a broader shift across the industry, as AI tools are increasingly designed to act without waiting for human approval. The challenge is balancing speed with control: too many guardrails slow things down, while too few can make systems risky and unpredictable. Anthropic’s new “auto mode,” now in research preview (meaning it’s available for testing but not yet a finished product), is its latest attempt to thread that needle.

Auto mode uses AI safeguards to review each action before it runs, checking for risky behavior the user didn’t request and for signs of prompt injection — a type of attack where malicious instructions are hidden in content that the AI is processing, causing it to take unintended actions. Any safe actions will proceed automatically, while the risky ones get blocked.
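As a rough illustration of that review-then-run pattern, here is a minimal Python sketch. Anthropic has not published how its safeguards classify actions, so everything here is hypothetical: the `Action` type, the `RISKY_MARKERS` list, and the simple string check stand in for whatever the real safety layer does.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Action:
    command: str     # what the agent wants to run
    requested: bool  # whether the user asked for this kind of action


# Hypothetical markers, chosen only to make the example concrete.
RISKY_MARKERS = ("rm -rf", "curl ", "DROP TABLE")


def is_risky(action: Action) -> bool:
    """Toy check: flag unrequested actions that contain a risky marker."""
    return (not action.requested) and any(
        marker in action.command for marker in RISKY_MARKERS
    )


def run_with_gate(actions: Iterable[Action], execute: Callable[[Action], None]):
    """Review each action before it runs; return (ran, blocked) command lists."""
    ran, blocked = [], []
    for action in actions:
        if is_risky(action):
            blocked.append(action.command)  # risky: never executed
        else:
            execute(action)                 # safe: proceeds automatically
            ran.append(action.command)
    return ran, blocked


executed = []
ran, blocked = run_with_gate(
    [Action("pytest -q", True), Action("rm -rf /tmp/build", False)],
    lambda a: executed.append(a.command),
)
```

The point of the sketch is the control flow, not the classifier: the check sits between the agent's decision and execution, so a blocked action is never handed to `execute`.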

It’s essentially an extension of Claude Code’s existing “dangerously-skip-permissions” command, which hands all decision-making to the AI, but with a safety layer added on top.

The feature builds on a wave of autonomous coding tools from companies like GitHub and OpenAI, which can execute tasks on a developer’s behalf. But auto mode goes a step further by shifting the decision of when to ask for permission from the user to the AI itself.

Anthropic hasn’t detailed the specific criteria its safety layer uses to distinguish safe actions from risky ones — something developers will likely want to understand better before adopting the feature widely. (TechCrunch has reached out to the company for more information on this front.)

Auto mode comes off the back of Anthropic’s launch of Claude Code Review, its automatic code reviewer designed to catch bugs before they hit the codebase, and Dispatch for Cowork, which allows users to send tasks to AI agents to handle work on their behalf.

Auto mode will roll out to Enterprise and API users in the coming days. The company says it currently only works with Claude Sonnet 4.6 and Opus 4.6, and recommends using the new feature in “isolated environments” — sandboxed setups that are kept separate from production systems, limiting the potential damage if something goes wrong.