Tech

Tiny startup Arcee AI built a 400B-parameter open source LLM from scratch to best Meta’s Llama

Many in the industry think the winners of the AI model market have already been decided: Big Tech will own it (Google, Meta, Microsoft, a bit of Amazon) along with their model makers of choice, largely OpenAI and Anthropic.

But tiny 30-person startup Arcee AI disagrees. The company just released a truly and permanently open (Apache-licensed), general-purpose foundation model called Trinity, and Arcee claims that at 400B parameters, it is among the largest open source foundation models ever trained and released by a U.S. company.

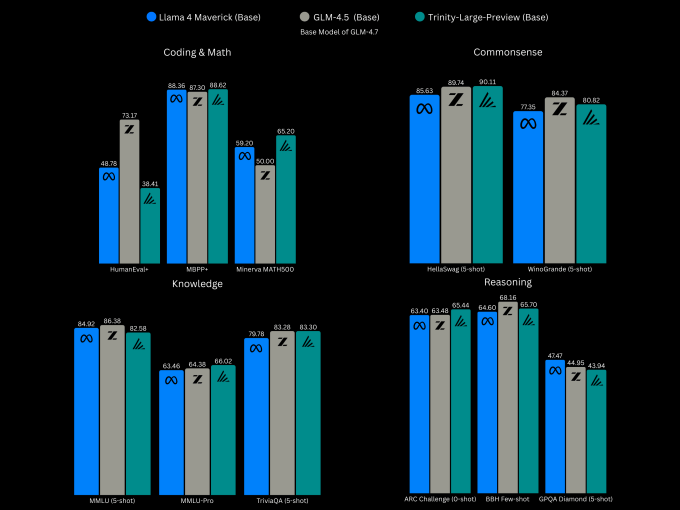

Arcee says Trinity is comparable to Meta’s Llama 4 Maverick 400B and Z.ai’s GLM-4.5, a high-performing open source model from China’s Tsinghua University, according to benchmark tests conducted using base models (that is, models with very little post-training).

Like other state-of-the-art (SOTA) models, Trinity is geared for coding and multi-step processes like agents. Still, despite its size, it’s not a true SOTA competitor yet because it currently supports only text.

More modes are in the works: a vision model is currently in development, and a speech-to-text version is on the roadmap, CTO Lucas Atkins (pictured above, on the left) told TechCrunch. In comparison, Meta’s Llama 4 Maverick is already multimodal, supporting text and images.

But before adding more AI modes to its roster, Arcee says, it wanted a base LLM that would impress its main target customers: developers and academics. The team particularly wants to woo U.S. companies of all sizes away from choosing open models from China.

“Ultimately, the winners of this game, and the only way to really win over the usage, is to have the best open-weight model,” Atkins said. “To win the hearts and minds of developers, you have to give them the best.”

The benchmarks show that the Trinity base model, currently in preview while more post-training takes place, is largely holding its own and, in some cases, slightly besting Llama on tests of coding and math, common sense, knowledge, and reasoning.

The progress Arcee has made so far to become a competitive AI lab is impressive. The large Trinity model follows two smaller models released in December: the 26B-parameter Trinity Mini, a fully post-trained reasoning model for tasks ranging from web apps to agents, and the 6B-parameter Trinity Nano, an experimental model designed to push the boundaries of models that are tiny yet chatty.

The kicker is, Arcee trained them all in six months for $20 million total, using 2,048 Nvidia Blackwell B300 GPUs. That came out of the roughly $50 million the company has raised so far, said founder and CEO Mark McQuade (pictured above, on the right).

That kind of cash was “a lot for us,” said Atkins, who led the model-building effort. Still, he acknowledged that it pales in comparison to how much bigger labs are spending right now.

The six-month timeline “was very calculated,” said Atkins, whose career before LLMs involved building voice agents for cars. “We are a younger startup that’s extremely hungry. We have a tremendous amount of talent and bright young researchers who, when given the opportunity to spend this amount of money and train a model of this size, we trusted that they’d rise to the occasion. And they certainly did, with many sleepless nights, many long hours.”

McQuade, previously an early employee at open source model marketplace Hugging Face, says Arcee didn’t start out wanting to become a new U.S. AI lab: The company was originally doing model customization for large enterprise clients like SK Telecom.

“We were only doing post-training. So we would take the great work of others: We would take a Llama model, we would take a Mistral model, we would take a Qwen model that was open source, and we would post-train it to make it better” for a company’s intended use, he said, including doing the reinforcement learning.

But as their client list grew, Atkins said, having their own model was becoming a necessity, and McQuade was worried about relying on other companies. At the same time, many of the best open models were coming from China, which U.S. enterprises were leery of, or were barred from using.

It was a nerve-wracking decision. “I think there’s less than 20 companies in the world that have ever pre-trained and released their own model” at the size and level that Arcee was gunning for, McQuade said.

The company started small, trying its hand at a tiny 4.5B-parameter model created in partnership with training company DatologyAI. The project’s success then encouraged bigger endeavors.

But if the U.S. already has Llama, why does it need another open-weight model? Atkins says that by choosing the open source Apache license, the startup is committed to always keeping its models open. This comes after Meta CEO Mark Zuckerberg last year indicated his company might not always make all of its most advanced models open source.

“Llama can be looked at as not truly open source as it uses a Meta-controlled license with commercial and usage caveats,” he says. This has caused some open source organizations to claim that Llama isn’t open source compliant at all.

“Arcee exists because the U.S. needs a permanently open, Apache-licensed, frontier-grade alternative that can actually compete at today’s frontier,” McQuade said.

All Trinity models, large and small, can be downloaded for free. The largest version will be released in three flavors. Trinity Large Preview is a lightly post-trained instruct model, meaning it’s been trained to follow human instructions, not just predict the next word, which gears it for general chat usage. Trinity Large Base is the base model without post-training.

Then there is TrueBase, a model without any instruct data or post-training, so enterprises or researchers that want to customize it won’t have to unroll any data, rules, or assumptions.

Arcee AI will eventually offer a hosted version of its general-release model at what it says will be competitive API pricing. That release is up to six weeks away as the startup continues to improve the model’s reasoning training.

API pricing for Trinity Mini is $0.045 / $0.15, and there is a rate-limited free tier available, too. Meanwhile, the company still sells post-training and customization options.
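The article quotes the two Trinity Mini prices without units. As a rough illustration only, the sketch below assumes the common convention of USD per one million input tokens and USD per one million output tokens. That reading is an assumption, not something stated above, and the function name and workload sizes are hypothetical.

```python
# Assumption: the two quoted prices are USD per one million input tokens
# and USD per one million output tokens. The article does not state units.
INPUT_PRICE_PER_MILLION = 0.045
OUTPUT_PRICE_PER_MILLION = 0.15


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of a workload under the assumed units."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical workload: 10 million tokens in, 2 million tokens out.
    print(f"${estimate_cost(10_000_000, 2_000_000):.2f}")
```

If the prices turn out to be quoted on a different basis, only the two constants and the divisor would need to change.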

Tech

Aurora lands McLane deal to run driverless truck routes in Texas

Aurora Innovation will start hauling loads in driverless trucks for distribution giant McLane, the latest company to adopt the startup’s autonomous vehicle technology following a multi-year pilot program.

Under the commercial agreement announced Wednesday, trucks outfitted with Aurora’s self-driving system will be used to transport goods between Dallas and Houston. These trucks will operate autonomously and will not have a human safety driver on board who can take over. However, Aurora will still have what it describes as a “human observer” sitting in the cab — who does not operate the vehicle — per an agreement it has with truck manufacturer Paccar.

Aurora said it plans to expand to new routes between McLane distribution centers across the U.S. Sun Belt by the end of the year.

The companies launched a pilot program in 2023 using autonomous trucks with a human safety operator. The pilot eventually expanded to two round-trips daily between Dallas and Houston.

McLane recently approved moving to driverless operations, which now run seven days a week between the two Texas cities.

The companies are taking a novel approach to this route, using Aurora’s driverless tech for the long-haul portion of the trip before handing it over to a McLane truck driver who makes local deliveries to customers like fast food restaurants. Aurora said this handoff occurs at the company’s Dallas and Houston terminals located right off the freeway.

The commercial contract is the latest win for Aurora as it tries to transition from a developer of autonomous trucks to a commercial operator earning money on its driverless routes. And it comes a year after the company launched its commercial self-driving truck service in Texas. Since then, Aurora has landed a commercial agreement to haul frac sand for Detmar Logistics. Last month, Hirschbach Motor Lines agreed to buy 500 Aurora-powered trucks; that agreement, which is outlined in a memorandum of understanding, is expected to close later this year.

Today, the company operates driverless trucks — some with a human observer still in the cab — on routes between Dallas and Houston, Fort Worth and El Paso, El Paso and Phoenix, Fort Worth and Phoenix, and Laredo and Dallas.

Aurora reports its first-quarter earnings Wednesday after the markets close.

When you purchase through links in our articles, we may earn a small commission. This doesn’t affect our editorial independence.

Tech

Ethos raises $22.75M from a16z for its expert network with voice onboarding

When companies are looking for opinions or advice on a project, they tend to go to LinkedIn or use expert networks such as GLG, Third Bridge, or Alphasights. But they often don’t find quality inputs, despite their searches.

Today, these sites ask experts to fill in a form based on their job title, which is then used to match them with companies in need of their help.

London-based Ethos thinks that AI can improve both sides of this experience. For experts, it offers voice-powered onboarding to ask a broader set of questions and get more data about their knowledge in various domains that their job titles don’t cover. For companies, Ethos can better match natural language queries posed by these organizations for their project, thanks to the wider range of data it has collected.

Ethos said that its voice-based onboarding and data allow it to answer complex client questions like, “Find me people who worked at a funded startup by A-grade investors solving for finance automation.”

Another example the startup gave was how a pharma company using its platform could search for doctors who specialize in a certain area, but who have also written papers on the subject or have an understanding of drug development.

Today, Ethos announced a $22.75 million Series A round led by a16z with participation from General Catalyst, XTX Markets, Evantic Capital, and Common Magic.

a16z’s Anish Acharya thinks that legacy platforms like LinkedIn and GLG only show shallow signals with job titles. He believes that Ethos captures different sub-specializations through its voice interview process with curated questions.

“I think voice is the original form of human communication. Most people, you know, most people don’t know how to write their story down in a very succinct, compelling, and accurate way. Voice is a big unlock for Ethos,” Acharya told TechCrunch over a call.

How Ethos is scaling its network

Ethos was founded by James Lo and Daniel Mankowitz in 2024. Lo previously worked at McKinsey and later at SoftBank, where he focused on the transformation of companies like WeWork and Arm. Mankowitz was an AI researcher at DeepMind, where he worked on YouTube’s video compression algorithm, Gemini, and the AlphaDev sorting algorithm.

Both founders arrived at tackling the problems of building an expert network from different angles. Lo always wanted to work on providing the right economic and employment opportunities to people. Mankowitz thought that the economy is a knowledge graph of people, companies, and products, and using the right algorithms, you can match these entities with each other.

“Traditional expert platforms almost purely focus on a mixture of job titles and job descriptions. What we observe is that most clients and most employers are not looking for a job title company. They’re looking for a specific skill and a specific capability. We also observed that, over time, looking for a skill and capability is going to gradually merge between the human economy and the agent economy,” Lo said.

Beyond the data provided by experts, Ethos also looks at other public sources like blogs and academic papers, along with social links to match companies with the right people.

The company also conducts interviews through its own platform using voice agents and extracts insights. Startups like Listen Labs and Outset already provide a way for companies to use conversational AI for interviews, offering some competition on this front. But Ethos thinks that its network of experts is better suited for certain clients than its competitors.

Ethos doesn’t name its client base, but said that top hedge funds, private equity firms, leading foundational AI labs, and enterprise consulting were already using its product. It’s taking 30% or more as a per-project fee from businesses, depending on the nature of the project. The company noted that it’s on track for “an eight-figure annualized revenue” but didn’t provide specific numbers.

It also didn’t say how many experts are on the platform, but said that roughly 35,000 people are joining each week. (Ethos sends invites to people who it thinks can benefit from it.)

One challenge for the startup is growing an expert user base that’s relevant to its clients. The company said that AI labs’ spending money to map human talent has been helping its cause.

“Our perspective here is the AI labs have — are pointing a giant capital gun at every economically valuable occupation in the world. They’re trying to map out every profession. And so that’s an amazing tailwind for us,” Lo said.

He noted that these labs are building professional services in areas of law, health, finance, and management, so they would want all kinds of experts in these networks to build out their models and get feedback about their products and strategy.

The company has eight people on its team now, and its goal is to keep the team compact while scaling up.


Tech

Apple to pay $250M to settle lawsuit over Siri’s delayed AI features

Apple has agreed to pay $250 million to settle a class-action lawsuit over how it marketed its AI features ahead of the launch of the iPhone 16. The Financial Times was the first to report the news.

The lawsuit alleges that Apple exaggerated the breadth of features Apple Intelligence would bring, which included a significantly upgraded version of its assistant, Siri. According to the complaint, the company created the impression that advanced AI capabilities would be available to users sooner than they actually were. The plaintiffs allege that Apple overstated both the readiness and functionality of these features, particularly the promised improvements to Siri, which have yet to fully materialize.

As a result, the complaint claims, people who bought the iPhone 15 or iPhone 16 believed they were paying for cutting-edge AI tools that were not actually available at the time of purchase. The lawsuit frames this as false advertising and says Apple’s marketing influenced buying decisions based on features that were incomplete or delayed.

Apple did not admit to wrongdoing in court, but has chosen to settle the case rather than continue with litigation. Under the proposed agreement, eligible U.S. customers who purchased the iPhone 15 or iPhone 16 between June 10, 2024, and March 29, 2025, could receive up to $95 per device.

Apple has been touting a more advanced version of Siri ever since it unveiled Apple Intelligence in 2024 during WWDC. The anticipated updates are expected to help Siri function more like modern AI chatbots such as ChatGPT or Claude. The upgraded experience is rumored to be powered by Google Gemini, though newer reports state the company’s next iPhone operating system may let users choose from a number of third-party large language models.

The settlement arrives ahead of Apple’s annual developer conference on June 8, when the company is expected to preview a version of its AI-enhanced Siri.
